For SMB at 10G or faster you need some tuning on Solarish and Windows, as the base settings are optimized for 1G, see

http://napp-it.org/doc/downloads/performance_smb2.pdf

Currently Solarish supports SMB 2.1. The kernel-based Solaris SMB server is a little faster on Oracle Solaris than on the free OpenZFS forks around Illumos. Nexenta adds SMB 3 to its commercial storage server. It may take some time until they upstream this to Illumos.

If you really need SMB 3 features, Windows may be faster and more stable than Samba with SMB 3, or faster than the Solaris SMB 2.1 server for file sharing, but I doubt that holds for storage and ReFS.

Regarding USB3:

This is included in Oracle Solaris and nearly ready in Illumos/OmniOS.

I always prefer NFS over iSCSI for ESXi. Performance is similar with matching sync/write-back settings, and NFS is much easier to handle for copy/move/clone/backup, as you can use SMB and Windows Previous Versions for VM management.

Exactly what I needed for SMB2.1 tuning, thanks!

It's exciting to hear that SMB3 might be coming to OmniOS in the future. It's not a deal breaker for me that the CIFS portion (which would generally only be used for async large sequential transfers) would be somewhat limited by SMB2.1. (i.e. a single-threaded workload of me extracting / moving incoming FTP/Torrent data around from my desktop via the NAS: photo archive, movies/TV, ISOs, etc.)

I'll 'just wait longer' on the USB3 as well: keep the PCI card in here and just not put any devices on it until its driver is qualified.

I always prefer NFS over iSCSI for ESXi as well. If your "single volume / single share" presentation is fast enough for everyone, all the time, then there is a benefit in sharing free space. Additionally, from a storage efficiency perspective, it would allow compression/dedupe to leverage all block space. (Kinda why I started on Windows 2012/2016 and tried CIFS/NFS from the same 'jumbo pool / jumbo file system'. When NFS puked, I reverted to iSCSI and it was OK, but only because I was able to get stable SRP drivers from Win>ESX.)

What was interesting over iSCSI is that I was seeing about 500 MB/s max read/write per VM per datastore. So I just carved out (8) 1TB thin iSCSI datastores and used Storage DRS to balance the VMs based on IO requirements. This effectively gave me (8) separate IO streams to work with, and brought the overall bandwidth up from 500 MB/s to 1-2 GB/s.

The same thing can apply to SMB2.1 vs. SMB3 with RDMA.

SMB3 with RDMA is nice because it multi-channels IO, even on a single IO stream, thus usually maximizing your network pipe for all workloads.

SMB2.1 doesn't have that, so instead of using Windows Copy, use Robocopy with as many threads as you want as a sort of band-aid to get the overall bandwidth you have available (assuming you are copying more than one file).
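To make the "many threads" idea concrete, here is a minimal Python sketch of the same band-aid: several files copied at once, each over its own stream. Robocopy does this natively with its `/MT` option; the function name `parallel_copy` and the default of 8 threads are just illustrative choices on my part, not anything from the tuning guide.

```python
import shutil
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def parallel_copy(src_dir, dst_dir, threads=8):
    """Copy every file under src_dir into dst_dir using several worker threads.

    Each worker copies one whole file at a time, so up to `threads` files
    are in flight at once. A single large file still travels as one stream.
    """
    src_dir, dst_dir = Path(src_dir), Path(dst_dir)
    # Build the file list up front so newly copied files are never picked up.
    files = [p for p in src_dir.rglob("*") if p.is_file()]

    def copy_one(src):
        dst = dst_dir / src.relative_to(src_dir)
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copies data plus timestamps
        return dst

    with ThreadPoolExecutor(max_workers=threads) as pool:
        return list(pool.map(copy_one, files))
```

As with Robocopy, this only helps when there is more than one file to move; whether it actually fills the pipe depends on the disks and the network on both ends.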

The question comes back to ZFS and NFS: if you use a single mount point/datastore, you may only get a single disk queue buffer's worth of bandwidth out of the network pipe (without substantial NFS/TCP buffer tweaking).

However, I suppose you could create several folders on the ZFS pool, mount them as separate datastores in ESX, and still use Storage DRS. (And my 30TB of free space will be seen 8 times, so it'll appear my ESX lab has 240TB.)
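The free-space double counting is just multiplication, but a quick sketch shows why the number is misleading. This assumes no quotas or reservations are set on the folders, so every datastore reports the full pool free space; the function name is made up for illustration.

```python
def apparent_free_tb(pool_free_tb, datastores):
    # Every datastore on the same pool reports the whole pool's free space,
    # so a client that sums them counts that space once per datastore.
    return pool_free_tb * datastores


pool_free_tb = 30  # free space in the pool, from the post above
datastores = 8     # folders mounted as separate NFS datastores

print(apparent_free_tb(pool_free_tb, datastores))  # 240 (what ESX would add up)
print(pool_free_tb)                                # 30  (what is really there)
```

Writing 1TB to any one datastore shrinks the free space shown on all eight, so the 240TB figure should not be used for capacity planning.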

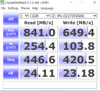

DOH, looks like I need to purchase the PRO license if I want to auto-tune, as the tuning guide points to a module that looks like it is no longer free and is now called advanced. I can get the values out of the screenshot and apply them manually (I think).