The original enterprise SP3 socket theoretically supports all three generations of EPYC (but most vendors only let each board support two generations, due to limited BIOS flash capacity).

...

That is sadly true, but with one exception: I can now say that the ASRock Rack ROMED8-2T does work with all three generations.

Not on the same BIOS, of course, and the newest BIOS for Milan drops support for Naples, but IT DOES!

But whose fault is that? AMD's? Well, yes! The OEMs'? Oh, definitely YES! They want to sell new boards every generation.

And that is not just an issue with AMD hardware, but with Intel too, of course!

For the desktop/WS version, let's see: X399, TRX40, and WRX80 were all specifically made not to support the next batch of CPUs (Zen 1, Zen 2-X, Zen 2-WX).

I don't see how that statement is actually true.

Because X399 had two generations of CPUs: TR 1900 and TR 2900.

That is the usual two generations of support you see with any Intel platform.

Of course, I would have liked to see more generations of support for it, but I also can't remember AMD promising anything in that regard.

Unlike with AM4, where they did promise and then ****ed and bodged it up.

Additionally, TRX40 is technically not that different from X399; the only real difference I know of, besides PCIe Gen 4 and its requirements, is the chipset link width change from x4 to x8.

That inherently does not make this platform any less built to support the next generation of CPUs, and to me, it actually suggests the opposite.

Actually, WRX80 and TRX40 do support the next generation; it is just that AMD decided to cancel one of those.

Considering that WRX80 is basically an SP3 server board with an added chipset, I don't see how it could have been built to be obsolete and not support the next generation of CPUs.

And yes, I am sad that AMD canceled (what was its name?) Chagall.

Would i have bought one? Likely not.

Maybe in two years' time, once they become affordable on the used market.

It's just that the customer decides what product gets made. Large enterprises have their own complex and reliable test methodologies, so they know exactly what AMD's unbalanced designs are good and bad at, which makes them rational and hard to bamboozle.

Bamboozle, yes; marketing can be bamboozling.

Good that companies care about their use case and look at what fits it best.

1. A long-time friend of mine went to work on drivers for AMD platforms and ended up working as an "AMD temporary worker". He asked me to remove a post mentioning him in a thread on Chiphell that was considered "negative" toward AMD.

I don't really understand what you mean, the way you have written it.

But if a friend were to write something on a forum and mention me more or less directly as a source, I'd likely have an issue with that as well.

So all I'm saying is that there doesn't need to be a conspiracy by AMD to cause someone to do that.

Just go to Bilibili and open any video about CPUs posted before the release of Intel's 12th gen. Look at the sheer amount of "AMD YES" in the bullet comments (danmaku) and regular comments, and at how many people pit overclocked AMD CPUs against Intel's stock or even downclocked parts. Sometimes you will see people secretly down- or overclocking memory or bus frequency to fudge the results.

3. Speaking of reviews, I'm not a believer in the north bridge design when it comes to gaming, or in sayings like "Intel performance regresses over generations". So once, after I saw the reviews, I borrowed CPUs and motherboards to test it out myself.

No thanks, I will not go to Bilibili, and I don't care what someone said under some random video.

Personally, I have not heard about "Intel perf regresses" over generations, unless you are referring to the Spectre and Meltdown mitigations.

Then comes the problem. My results met my expectations and were almost the same as TechPowerUp's (AMD Ryzen 7 5800X Review). So why were they attacked and forced to apologize and explain the situation in an AMD-favored way?

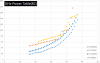

Here's one of my graphs, all locked at 4 GHz.

Those are some fine charts you made there.

As far as I understand those and the ones TechPowerUp made, I can't say that they are almost the same.

I can't even really find a distant relationship between them, though that might just be me looking at this at 5 in the morning.

Let me just say that actual benchmarking is surprisingly hard.

And "why are the results the way they are?" is always the base question that should be asked and answered.

With that in mind, I noticed some questionable things in the TechPowerUp charts.

Regarding your charts, the only two questions I have are why you capped the clocks at 4 GHz, and why you chose those games specifically and what they are meant to represent.

But on the outside, AMD fans are notorious for fabricating test data and bending the truth with their explanations to suit their needs, while swarm-attacking everyone who has second thoughts. There is also a lot of evidence indicating that AMD pays independent reviewers and commenters for its reputation campaign. If you've read "The Crowd", you should already know why they did all of this, and why some people just buy it.

Oh, **** fans of any kind.

Fandom for big tech companies is a fricking bad idea.

But everything you said in that quote specifically, I think you could swap out for Intel and especially Nvidia, and it would ring just as true.

Though you do give me the impression of someone who is disinclined towards AMD.

Maybe to a degree I would expect from a fan of the other companies.

I just miss the time when flagship MSDT/HEDT CPUs only cost $400/$1000.

Well, I would agree if I could actually miss those times.

Though I guess anything is better than what we have right now in the GPU market.