Hi,

I am currently running a VMware environment at home, using vSAN for shared storage and Horizon for VDI. I also have a bunch of VMs running (<20).

This is licensed via VMUG, so 200 bucks a year. My vSAN is all-flash (P3700 & P320h as cache, 750s as capacity), currently 3 nodes, soon to be 4.

Now I know that hyperconverged primarily targets enterprise users, where it's more important to have a consistent and equal distribution of performance across a larger user base, as opposed to peak performance for a few users.

I am aware that given the low node and disk count (1 cache + 1 capacity per node) the most I can get is one disk's worth of performance, but at least for writes I am not even getting that.

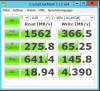

I have run a few tests (I know CDM is not really the best tool for this; it's just to illustrate the difference between a local SSD and the vSAN).

It's the same VM moved to different hosts (with different CPUs and cache drives).

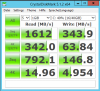

The local drive on a 2667 (2 cores):

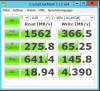

vSAN-based VM on the same box, with a P320h:

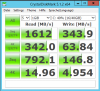

vSAN-based VM on a 2630L with a P3700:

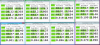

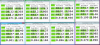

And lastly, to test whether this really might be due to the multi-user design, 8 concurrent CDM runs on the 2667/P320h (with 8 cores). Note that the CDM runs did not all start at the same time; they started in groups, as marked, with a delay of maybe 15 secs in total. That means the last CDM run finished about 15 secs after the first one, so some tests did not fully overlap and at some point there was mixed read/write going on.

Yes, I know: "run a proper test using fio" or such.
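
For reference, a fio run covering roughly the same four patterns as CDM could be sketched like this. It is only a rough sketch, not something I have run here: the block sizes, queue depth, file size, runtime and the `fio-test.bin` file name are my own assumptions, and `windowsaio` assumes a Windows guest (on Linux it would be `libaio`). The script only builds and prints the command lines, it does not start fio.

```python
import shlex

# Assumed workload mix, loosely modelled on CDM's sequential and 4K random tests.
# Tuple layout: (job name, fio rw mode, block size, queue depth)
WORKLOADS = [
    ("seq-read", "read", "1m", 32),
    ("seq-write", "write", "1m", 32),
    ("rand-read", "randread", "4k", 32),
    ("rand-write", "randwrite", "4k", 32),
]


def fio_command(name, rw, block_size, iodepth, target,
                runtime_s=60, size="4g", ioengine="windowsaio"):
    """Build the fio argument list for one workload (nothing is executed)."""
    return [
        "fio",
        f"--name={name}",
        f"--filename={target}",      # hypothetical test file on the disk under test
        f"--rw={rw}",
        f"--bs={block_size}",
        f"--iodepth={iodepth}",
        f"--ioengine={ioengine}",    # assumption: Windows guest; use libaio on Linux
        "--direct=1",                # bypass the guest page cache
        f"--size={size}",
        f"--runtime={runtime_s}",
        "--time_based",              # keep running for the full runtime
        "--group_reporting",
    ]


def build_all(target):
    """Return one fio command per assumed workload, all against the same file."""
    return [fio_command(n, rw, bs, qd, target) for n, rw, bs, qd in WORKLOADS]


if __name__ == "__main__":
    for argv in build_all("fio-test.bin"):
        print(shlex.join(argv))
```

Running the same four commands once on the local SSD and once on the vSAN datastore would give numbers that are at least comparable to each other.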

I can live with the current setup, but I wonder whether one of the alternative options would provide more individual performance than VMware/vSAN. Of course, it's totally possible that my (unsupported HW) setup is just not well built and this is just a config issue.
