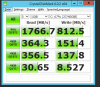

What are realistic read/write numbers from vSAN? I know this is a *big* "it depends".

My setup

3x HP DL325 (7402P, 64GB) - each host with 1x NVMe 1725b, namespace split into 5 (600GB cache, 590GB capacity)

LINK - VMware vSAN + NVMe namespace magic: Split 1 SSD into 24 devices for great storage performance

Since this is a 3-node system, my understanding is that I have FTT=1 (I can only lose 1 node, and then I have no rebuild ability). Does this make it 2x mirrored copies + 1x witness?
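To check my own reasoning on the node count, here is a rough sketch. It assumes the usual rule for vSAN RAID-1 mirroring that tolerating n failures needs 2n+1 hosts (n+1 data copies plus n witness components). The function names are made up for illustration.

```python
def min_hosts_for_mirroring(failures_to_tolerate):
    # Assumption: RAID-1 mirroring needs n+1 data copies plus n witnesses,
    # each on a separate host, so 2n+1 hosts in total.
    return 2 * failures_to_tolerate + 1


def spare_hosts(total_hosts, failures_to_tolerate):
    # Hosts beyond the minimum are what leave room to rebuild after a failure.
    return total_hosts - min_hosts_for_mirroring(failures_to_tolerate)


print(min_hosts_for_mirroring(1))  # 3
print(spare_hosts(3, 1))           # 0 -> nowhere to rebuild with 3 nodes
print(spare_hosts(4, 1))           # 1 -> a 4th node gives rebuild headroom
```

If that rule holds for my setup, 3 nodes is exactly the minimum for tolerating 1 failure, which matches the "no rebuild ability" part.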

So, if I'm running a 10GbE network and I have a VM doing writes, is getting 500 MB/s good? Does this VM have to write to 2 other nodes for its mirror (aka FTT=1), so that write bandwidth is split between 2 nodes - or am I completely off?

2x 500 MB/s connections = 1 GB/s (which comes close to maxing out the 10GbE network, at 1.25 GB/s theoretical).
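Here is the back-of-the-envelope math as a small sketch. It assumes the worst case, where both mirror copies land on other hosts and so leave the VM's host over the same 10GbE link. It also ignores protocol overhead, so real numbers will be lower. The function names are just for illustration.

```python
def link_gbytes_per_s(link_gbit):
    # Convert a link speed in gigabits/s to gigabytes/s (8 bits per byte).
    return link_gbit / 8


def worst_case_vm_write_mb_s(link_gbit, replicas):
    # Assumption: every replica write crosses the same uplink,
    # so the link bandwidth is divided evenly between the replicas.
    return link_gbytes_per_s(link_gbit) * 1000 / replicas


print(link_gbytes_per_s(10))            # 1.25 (GB/s, theoretical)
print(worst_case_vm_write_mb_s(10, 2))  # 625.0 (MB/s per VM write stream)
```

Under those assumptions the ceiling for a single VM's writes is about 625 MB/s, so 500 MB/s would already be most of what one 10GbE link can carry.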

I think if I can figure out LACP on ESXi (not having luck so far), I could get to 1 GB/s writes (doubled-ish).

I'm thinking that to scale correctly I need to go to a 40GbE switch and maybe add a 4th node to get the most out of these speeds?

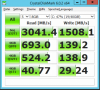
