I have two NAS builds with an H310 HBA (IT mode), ASRock E3C224 motherboard, E3-1241v3 CPU, 32GB RAM, Chenbro NR40700 chassis, Mellanox ConnectX-3 CX312A 10GbE NICs, and 24x HGST NAS 4TB drives (software RAID6). I just migrated from a Supermicro SC846 chassis with the SAS2 backplane, which showed the same problem I'm having now.

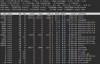

When transferring data between the servers (10GbE networking through a Ubiquiti ES-16-XG switch), I'm finding that SMB transfers on OpenMediaVault top out at about 480 MB/s, when, given the setup, I'd expect closer to 700 MB/s. iPerf between the servers pretty much saturates the 10GbE link, and a local file copy between the RAID6 array and a ramdisk on each server doesn't get much faster than 480 MB/s.
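To make the local array-to-ramdisk test repeatable, one option is to time a large sequential copy with big buffers. This is a minimal sketch under my own assumptions, not the method used for the numbers above; `timed_copy` is a hypothetical helper. On Linux, drop the page cache first (as root, write `3` to `/proc/sys/vm/drop_caches`), otherwise a recently read file may be served from RAM instead of the array.

```python
import os
import shutil
import time


def timed_copy(src: str, dst: str, bufsize: int = 16 * 1024 * 1024) -> tuple[int, float, float]:
    """Copy src to dst in large chunks; return (bytes copied, seconds, MB/s).

    Hypothetical helper for illustration. MB here means 10**6 bytes.
    """
    start = time.perf_counter()
    with open(src, "rb") as fin, open(dst, "wb") as fout:
        # Large sequential chunks, similar to a single-stream file copy.
        shutil.copyfileobj(fin, fout, bufsize)
        fout.flush()
        # Flush dst to its backing store so buffered writes are counted in the timing.
        os.fsync(fout.fileno())
    elapsed = time.perf_counter() - start
    size = os.path.getsize(dst)
    mbps = size / elapsed / 1e6 if elapsed > 0 else float("inf")
    return size, elapsed, mbps
```

Usage would be something like `timed_copy("/path/on/array/bigfile", "/path/on/ramdisk/bigfile")` on each server, where both paths are placeholders. Running it in both directions helps separate read limits from write limits on the RAID6 array.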

I doubt it's the Mellanox NICs, since iPerf saturates 10GbE, but could it still be the NICs, and do they need to be tuned?

I also doubt it's the backplanes, since I've seen the same speeds with my Supermicro SAS2 backplane and my new Chenbro backplanes. I should note that each SAS port on my H310 connects to a CB3 port on the Chenbro backplanes.
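A rough link budget supports the idea that the HBA path shouldn't be the cap. This is back-of-envelope arithmetic under assumed nominal rates, not measurements: 10GbE at 10 Gbit/s ignoring protocol overhead, SAS2 at 6 Gbit/s per lane with 8b/10b encoding, the H310's two x4 ports giving 8 lanes total, and the card getting all eight PCIe 2.0 lanes (5 GT/s each, also 8b/10b) from its slot.

```python
# Back-of-envelope link budgets in MB/s (10**6 bytes/s).
# Assumed nominal figures, not measured on these servers:
#  - 10GbE: 10 Gbit/s line rate, ignoring Ethernet/TCP/SMB overhead
#  - SAS2: 6 Gbit/s per lane, 8b/10b encoded; H310 = two x4 ports = 8 lanes
#  - PCIe 2.0: 5 GT/s per lane, 8b/10b encoded; assumes a full x8 slot


def gbit_to_mbytes(gbit_per_s: float, encoding: float = 1.0) -> float:
    """Convert a line rate in Gbit/s to payload MB/s after coding overhead."""
    return gbit_per_s * 1e9 * encoding / 8 / 1e6


budgets = {
    "10GbE line rate": gbit_to_mbytes(10),
    "SAS2, 8 lanes": 8 * gbit_to_mbytes(6, 8 / 10),
    "PCIe 2.0 x8": 8 * gbit_to_mbytes(5, 8 / 10),
}
observed = 480  # MB/s from the SMB and local-copy tests

for name, mbps in budgets.items():
    # Share of each link's nominal capacity that the observed rate represents.
    print(f"{name:16s} {mbps:7.0f} MB/s  (observed uses {observed / mbps:.0%})")
```

Under these assumptions the links come out to roughly 1250, 4800, and 4000 MB/s, so 480 MB/s would use well under half of any of them. That points more toward the RAID6 layer or disks than the HBA or backplane wiring, though it doesn't rule out expander or slot-width issues.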

I'd like to figure out whether I can improve transfer speeds, but I'm also curious whether I'll run into the same issue when I move to FreeNAS/ZFS with 10TB drives in a couple of months.

Ideas?