Dear STH community, let me introduce my Fun Build, also known as the Storage (and wallet) Ripper.

Build’s Name: Fun Build aka StorageRipper

Operating System: Windows 10 LTSC

Chassis: Fractal Design Define 7 XL, which seems to be a pretty popular choice for TR Pro builds

CPU: AMD Ryzen Threadripper PRO 3975WX -> 5975WX

CPU cooler: Thermalright Silver Arrow TR4 -> Noctua NH-U14S TR4-SP3 with a second fan

Motherboard: ASUS Pro WS WRX80E-Sage SE WIFI

RAM: 256GB (8 x Kingston Server Premier DIMM 32GB, DDR4-3200, CL22-22-22, ECC), KSM32ED8/32ME -> KSM32ED8/32HC, overclocked to 3600 @ CL16

GPU: ASUS TUF Gaming GeForce RTX 3070 V2 OC (0.8k), just to have a GPU with moderate power consumption

IOPS drives: 3 x Intel Optane P5800X 1.6TB, one for the OS and two for data

Main storage and bandwidth drives: 32 x Samsung PM1643a SAS 12G 3.84TB

RAID controller: Microchip (Adaptec) SmartRAID Ultra 3258P-32i, 32 ports, 3258UPC32IXS

Enclosures/backplanes for PM1643a drives: 4 x IcyDock MB508SP-B

Cabling: 4 x SlimSASx8-2MiniSAS-HDx4-0.8M (2304900-R) + a couple of SFF-8643 to U.2 cables from AliExpress

PSU: Seasonic Prime PX-1300, 1300W

UPS: APC SMT1500IC

Usage Profile: I work as a scientist, and from time to time I deal with 3D geometries and 3D flow fields ranging from ~1GB to a few TB per file. These files are simulation output from supercomputing facilities, i.e., the Fun Build is intended for processing and storing them, as well as for developing simulation software, but not for running the simulations themselves. I/O operations include sequential reads and, sometimes, writes that modify those files, using my own C code or third-party software. The 32 x PM1643a are intended for this task. In addition, I deal with 3D computed-tomography scans of real geometries, ranging from hundreds to tens of thousands of .tif files per geometry, with a single file ranging from ~0.1 to ~5 MB. Developing and running the reconstruction algorithms requires simultaneous reading and writing of those files, which is what the Optanes were installed for.
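For the large-file workload, the figure that matters is what a single sequential reader actually gets from the PM1643a array. Below is a minimal Python sketch of such a pass, not the author's C code: the function name `sequential_read_throughput` and the 16 MiB block size are illustrative assumptions. With 256GB of RAM, a file that fits in the Windows file cache will report cache speed on a repeated run, so test with a file larger than RAM.

```python
import sys
import time


def sequential_read_throughput(path: str, block_size: int = 16 * 1024 * 1024) -> tuple[int, float]:
    """Read `path` front to back in fixed-size blocks; return (bytes_read, MB/s)."""
    buf = bytearray(block_size)   # one reusable buffer, no per-read allocation
    view = memoryview(buf)
    total = 0
    start = time.perf_counter()
    # buffering=0 returns a raw FileIO, so readinto() goes straight to the OS
    with open(path, "rb", buffering=0) as f:
        while True:
            n = f.readinto(view)
            if not n:             # 0 bytes read means end of file
                break
            total += n
    elapsed = time.perf_counter() - start
    rate = total / elapsed / 1e6 if elapsed > 0 else float("inf")
    return total, rate


if __name__ == "__main__" and len(sys.argv) > 1:
    # usage: python seqread.py <path-to-large-file>
    nbytes, mb_s = sequential_read_throughput(sys.argv[1])
    print(f"read {nbytes} bytes at {mb_s:.0f} MB/s")
```

Large blocks keep per-call overhead negligible relative to the transfer itself; the block size can be varied to see where throughput stops improving on a given RAID configuration.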

The Fun Build:

I will post performance numbers a bit later.

Some highlights during installation and testing:


- I tried to overclock “the system”, but there is no way at all. The CPU cannot be overclocked (an AMD restriction), and memory overclocking is not supported on this mobo, as ASUS support replied. That is, there are frequency settings above 1600MHz (DDR4-3200), but even the smallest step above this frequency results in no boot. At all. So I tightened the timings a little, to 18-19-18-52-74-1T @ 1.3V. And if there is no overclocking, let me do undervolting :) I reduced the Vcore and CPU SoC voltages via offsets of 0.1V and 0.25V, respectively.

- Fan noise is low to moderate, even at full load (Prime95).

- A single 120mm fan (F1) at ~1200RPM is enough to cool the RAID controller (below 55-57C under load), the north bridge, and the Optane (<35C). Room temperature is ~24C.

- The GPU is supported by a small stand (S1).

- The rear fan is off; it seems to be unnecessary.

- I plan to keep the bottom cables as shown (outside the case); the front case door will not be installed.

- For better cooling of the PM1643a drives, I attached rubber legs to three of the IcyDock enclosures to create gaps between them and let more air from the front 140mm fans flow through. Those 140mm fans cool all 32 x PM1643a and 2 x P5800X. PM1643a temperatures are in the 39-41C range. The default front door of the Define 7 XL was removed: closing it raises SSD temperatures by ~15C.

- Due to the SATA power-disable (PWDIS) “feature”, I had to tape over the 3rd power pin on all 32 SAS SSDs to get them to power on inside the IcyDock enclosures.

- The plastic divider in the front frame was removed so that the 140mm fans could be mounted on the outside.

- It seems there is a mistake in the WRX80E-Sage SE WIFI manual: the two U.2 ports support PCIe 4.0, not 3.0 as stated. IOPS and bandwidth benchmarks for the cable-connected Optanes are identical to those of the one installed in a PCIe slot. Without those two U.2 ports, I do not know how I would have connected 2 x P5800X. Anticipating possible issues, I bought several M.2 -> U.2 adapters (+ SFF-8643 to U.2 cables) from AliExpress and, as expected, two different adapters did not work at all with the P5800X on my system. Using PCIe slots is also not as straightforward: moving the already installed single Optane one slot up cut its bandwidth by a factor of four, while HWiNFO64 still reported a PCIe 4.0 link with the correct payload size. Additionally, two more Optanes in PCIe slots would block the airflow from the F1 fan, which the RAID controller badly needs.

- “The tower” of four IcyDocks with 32 x PM1643a weighs about 10kg (the whole build is >30kg). Once installed, it slightly bends the plastic plates under it. To prevent this, I added some soft, low-memory material under those plates, over the edges of the metal box holding the Optanes.

- The AliExpress cables are shorter than the branded ones (2304900-R), but they resulted in higher non-medium error counts in the SSDs' SMART data.

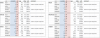

- Power consumption according to the SMT1500IC display: ~120W at idle without the PM1643a SSDs, ~280W with the SSDs idle; +150W under IO load, and +220W under Prime95 Small FFTs without IO load. -> After the CPU replacement and memory overclocking, add 80W to those numbers.
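The power readings above can be turned into a rough budget against the 1300W PSU. This Python sketch assumes the +150W, +220W and +80W deltas are all relative to the ~280W idle figure and simply add up when IO and CPU load coincide; they were measured separately, so the combined value is an estimate, not a measurement. The function names are hypothetical, and since the UPS display shows wall power, the DC load on the PSU is somewhat lower than these numbers.

```python
# Wall-power readings from the SMT1500IC display, as given in the post (watts)
IDLE_NO_SAS_SSDS = 120   # idle, PM1643a SSDs not installed
IDLE_WITH_SSDS = 280     # idle, all 32 SSDs installed
IO_LOAD_DELTA = 150      # extra under IO load
PRIME95_DELTA = 220      # extra under Prime95 Small FFTs, no IO load
UPGRADE_DELTA = 80       # extra after the CPU swap and memory overclock
SSD_COUNT = 32
PSU_RATING = 1300        # Seasonic Prime PX-1300


def estimate_wall_power(io: bool, cpu: bool, upgraded: bool = True) -> int:
    """Rough wall-power estimate (assumption: deltas add linearly on top of idle)."""
    watts = IDLE_WITH_SSDS
    if io:
        watts += IO_LOAD_DELTA
    if cpu:
        watts += PRIME95_DELTA
    if upgraded:
        watts += UPGRADE_DELTA
    return watts


def idle_watts_per_ssd() -> float:
    """Idle draw added per SSD, including any enclosure overhead."""
    return (IDLE_WITH_SSDS - IDLE_NO_SAS_SSDS) / SSD_COUNT


if __name__ == "__main__":
    worst = estimate_wall_power(io=True, cpu=True)
    print(f"worst case ~{worst} W, {worst / PSU_RATING:.0%} of the PSU rating")
    print(f"~{idle_watts_per_ssd():.1f} W per SSD at idle")
```

Run as-is, it prints a worst case of ~730 W (56% of the PSU rating) and ~5.0 W per SSD at idle, which suggests the PSU has comfortable headroom under these assumptions.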
