For those who care, I have almost 1000 iperf3 data points between 4 machines on my 10 GbE network, in a spreadsheet at

docs.google.com

All 4 boxes have Aquantia AQN-107 x4 NICs.

I tested every host combination with 1 to 4 TCP streams: without jumbo frames, with 4KB jumbo frames, and with 9KB jumbo frames. There are also some tests measuring the impact of Energy-Efficient Ethernet (EEE).
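For anyone wanting to script a similar matrix instead of launching each run by hand, here is a minimal sketch. It assumes every ordered client/server pair is run in both directions; only HIGGS and BUMBLEBEE are real host names from the spreadsheet, the other two are placeholders. Jumbo frames and EEE are NIC settings, so they have to be changed outside iperf3 between passes.

```python
from itertools import permutations, product

# Only HIGGS and BUMBLEBEE are named in the spreadsheet; HOST3/HOST4 are placeholders.
HOSTS = ["HIGGS", "BUMBLEBEE", "HOST3", "HOST4"]
STREAMS = [1, 2, 3, 4]
# iperf3 -R reverses the direction, so the client receives instead of sends.
MODES = {"Sending": [], "Receiving": ["-R"]}


def iperf3_commands(hosts=HOSTS, streams=STREAMS, seconds=10):
    """Return (client, server, mode, streams, argv) for every test in the matrix."""
    commands = []
    for (client, server), n, (mode, extra) in product(
        permutations(hosts, 2), streams, MODES.items()
    ):
        # -P sets the number of parallel streams, -t the duration, -J asks for JSON output.
        argv = ["iperf3", "-c", server, "-P", str(n), "-t", str(seconds), "-J", *extra]
        commands.append((client, server, mode, n, argv))
    return commands


if __name__ == "__main__":
    for client, server, mode, n, argv in iperf3_commands()[:4]:
        print(f"on {client} ({mode}, {n} stream(s)):", " ".join(argv))
```

Each command has to be started on the listed client while `iperf3 -s` is running on the server. Under these assumptions the matrix has 96 rows per configuration column.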

Tests are between 4 machines of various generations, which are described in the second sheet (click at the bottom).

The oldest dates from 2011: an AMD FX-8120 with a PCIe 2.0 bus. All the others are PCIe 3.0. The most recent CPU is a Ryzen 2700 from 2018.
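As a rough sanity check on whether the PCIe generation can matter for a 10 Gbps NIC, the usable link bandwidth can be estimated from the per-lane signalling rate and the line encoding. This sketch assumes the x4 card actually negotiates all 4 lanes in every machine, which I have not verified, and it ignores PCIe packet overhead.

```python
# Per-lane raw rate in GT/s and line-code efficiency for each PCIe generation.
PCIE = {
    "2.0": (5.0, 8 / 10),     # 8b/10b encoding
    "3.0": (8.0, 128 / 130),  # 128b/130b encoding
}


def pcie_gbps(gen, lanes):
    """Link bandwidth in Gbps per direction, before PCIe protocol overhead."""
    rate, efficiency = PCIE[gen]
    return rate * efficiency * lanes


for gen in PCIE:
    print(f"PCIe {gen} x4: {pcie_gbps(gen, 4):.1f} Gbps before protocol overhead")
```

By this estimate a PCIe 2.0 x4 link still has headroom above 10 Gbps, while a card that only negotiated x1 or x2 lanes on PCIe 2.0 would not.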

I haven't fully analyzed all the results yet - there are a hell of a lot of data points. Some could be erroneous due to manual entry. Each column takes about 20 minutes to start and run, and a few more to enter the data.

So far, I can see that:

1) The two switches, one consumer, one pro, don't appear to be slowing anything down, as most tests with recent machines and multiple streams get close to line rate.

2) Jumbo frames aren't helping with single-stream tests, which never saturate the network. Actually, jumbos seem to be hurting throughput in many single-stream tests, which I thought odd. Jumbos do help with multiple streams.

3) None of the tests appear to be strictly bound by the PCIe bus. Even the 2011 PCIe 2.0 box, BUMBLEBEE, achieves 9.79 Gbps in a few of the tests.
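To put "close to line rate" in context, the best-case TCP payload rate on a 10 Gbps link can be estimated from the per-frame overhead. This is a back-of-the-envelope sketch assuming IPv4 and TCP headers without options. The exact MTU values behind the "4KB" and "9KB" settings are not stated above, so 4000 and 9000 are placeholders.

```python
# Bytes on the wire per frame that are not part of the IP packet:
# preamble + SFD (8), Ethernet header (14), FCS (4), inter-frame gap (12).
ETH_OVERHEAD = 8 + 14 + 4 + 12
# IPv4 header + TCP header, both without options (assumption).
IP_TCP_HEADERS = 20 + 20


def tcp_goodput_gbps(mtu, line_rate_gbps=10.0):
    """Upper bound on TCP payload throughput for a given MTU."""
    payload = mtu - IP_TCP_HEADERS
    on_wire = mtu + ETH_OVERHEAD
    return line_rate_gbps * payload / on_wire


# 4000 and 9000 are assumed stand-ins for the "4KB" and "9KB" settings.
for mtu in (1500, 4000, 9000):
    print(f"MTU {mtu}: {tcp_goodput_gbps(mtu):.2f} Gbps")
```

One possible use is as a check on manually entered values: a result noticeably above the ceiling for its frame size is more likely a typo than a real measurement.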

Data that isn't in the spreadsheet: CPU usage. I didn't try to record that. I can say that the NICs behave wildly differently across these 4 machines, which have 4 different CPUs and chipsets. The sender always uses less CPU, because of the way LSO (large send offload) works and because some of the TCP receive processing cannot be fully offloaded. I read a paper about it over the weekend while waiting for some tests to complete.
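One way to capture CPU usage and avoid manual entry in future runs would be to have iperf3 emit JSON (`-J`) and pull the numbers out with a script. A minimal sketch follows; the sample document is a trimmed stand-in with placeholder values, not a real measurement, and real reports contain many more fields.

```python
import json


def summarize(report):
    """Extract throughput and CPU usage from a parsed `iperf3 -J` TCP report."""
    end = report["end"]
    cpu = end["cpu_utilization_percent"]
    return {
        "gbps": round(end["sum_received"]["bits_per_second"] / 1e9, 2),
        "local_cpu_pct": cpu["host_total"],     # machine that ran the iperf3 client
        "remote_cpu_pct": cpu["remote_total"],  # machine that ran `iperf3 -s`
    }


# Placeholder values only, shaped like the "end" section of an iperf3 JSON report.
sample = json.loads("""
{
  "end": {
    "sum_received": {"bits_per_second": 5000000000.0},
    "cpu_utilization_percent": {"host_total": 10.0, "remote_total": 20.0}
  }
}
""")

print(summarize(sample))
```

As far as I know, the CPU figures iperf3 reports cover the iperf3 process itself, so interrupt handling done elsewhere in the system may not be fully reflected.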

Things I still want to test:

- the various "interrupt moderation" settings. They were left at the default in all these tests. On Windows, that's "Enabled: Adaptive". On Linux, I believe it is "Disabled".

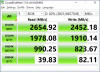

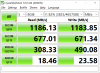

- disk writes. I do have one real drive that performs beyond 10 Gb/s. It is made up of 5 x 1TB Samsung 860 EVO SSDs. CrystalDiskMark local test attached. UserBenchmark always tells me it detected a cached drive, but that's not the case ... Remote test not done yet.

I guess this drive justifies 25 GbE, right?

Spreadsheet: "10 GBASE-T Ethernet performance in my home network"

Columns: Client, Server, Mode, Streams, ten Gbps columns (one per configuration), Slowest, Fastest
EEE per Gbps column: off, off, off, off, on, on, off, off, on, on
Jumbo frames per Gbps column: off, off, 4KB, 4KB, 4KB, 4KB, 9KB, 9KB, off, 9KB
Notes: Run #1, Run #2, Run #1, Run #2, Run #1, Run #2, Run #1, Run #2
First row: HIGGS, BUMBLEBEE, Receiving, 1 stream: 7.16, 7.53, 7.16...
