Hello everybody,

I have been reading here for some time and have now signed up, looking forward to exchanging ideas about our server hobby. Sorry for any odd language, as English is not my native tongue.

I am setting up a small "playserver" homelab environment for Hyper-V. The server is a Supermicro-based box with a dual-core Haswell Pentium at 3.2 GHz, 8 GB RAM and 2 onboard Intel NICs (plus IPMI). I have installed Windows Server 2012 R2 Standard with the Hyper-V role onto a software mirror of two very old laptop drives, as I have read that the management OS does not need high IOPS or disk bandwidth. This software mirror is host drive C:.

Furthermore, I have 4 very old 1 TB WD Green drives, set up as a 2-way mirror storage space with 2 columns, which is roughly like a RAID 10. That storage space (SS) mirror is host drive F:, where all VHDX files are located.

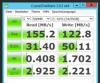

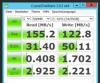

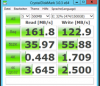

When benchmarking the SS drive F: from within the physical host (Server 2012 R2 Standard with the Hyper-V role enabled), I get the following results:

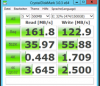

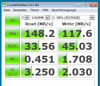

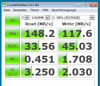

Not that bad for 7-year-old 5400 rpm consumer drives. I then set up a generation 2 VM running Windows Server 2012 R2 with the Essentials role enabled. This VM has a C: drive VHDX for the OS and an E: drive VHDX for the data. Both VHDX files reside on the SS mirror. When benchmarking the drives from within the virtual R2 server, I get the following results:

Drive C: OS

Drive E: DATA

A certain loss due to virtualisation, but acceptable nonetheless.

I have teamed the two host NICs and connected the team to a virtual external switch. The VMs are connected to this one and only virtual external switch. The team is switch dependent and I have configured my GS110TP switch accordingly.

When benchmarking from an external physical client (a laptop connected to my switch) against a file share on the virtual server's E: drive, I get very slow results:

This is a loss of performance of up to 66%! While benchmarking, the CPU runs at approx. 65-75% within the VM and 15-45% on the Hyper-V host.

I have set up a second VM, a generation 1 Windows 7 Pro VM with only one OS disk, which is drive C:. Its VHDX also resides on the host's F: drive, the SS mirror. When measuring from within the Win 7 VM, I get the following results:

Somewhat comparable to the results of the server VM, and OK. But when I benchmark a file share on the virtual server from the Win 7 VM, bandwidth drops to unacceptable levels:

And now comes the weird part: I have set up a file share on the Hyper-V host on the F: drive, where the VM files are located, in order to check what happens when I access a file share on the physical host from the Win 7 VM. That way I hoped to see whether the problem is low disk performance or low network performance. I get the following results:

Now I am not sure what to think or where to look. I was of the opinion that putting 4 old drives in a striped mirror would give me enough power to "almost" saturate a gigabit network in most cases. But somewhere in this setup the performance gets lost and I do not know where. I know the hardware is very weak, but can this be the only reason? I could just solve the problem with money, better hardware and SSDs, but IMHO this does not make sense without understanding what is happening in the first place.
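To put a number on what "almost saturate a gigabit network" could mean, here is a rough back-of-the-envelope sketch in Python. It assumes a standard 1500-byte MTU (no jumbo frames), plain IPv4 and TCP headers without options, and it ignores SMB protocol overhead entirely, so the real ceiling for a file copy will be somewhat lower. The function names and the 40 MB/s sample value are just placeholders of mine, not measurements from my setup.

```python
# Rough upper bound for TCP payload throughput on a single gigabit link.
# Assumptions: 1500-byte MTU, IPv4 + TCP without options, SMB overhead ignored.

LINK_BITS_PER_SECOND = 1_000_000_000  # 1 Gbit/s line rate
MTU = 1500                            # bytes of IP packet per Ethernet frame
ETHERNET_OVERHEAD = 38                # preamble/SFD 8 + header 14 + FCS 4 + inter-frame gap 12
IP_TCP_HEADERS = 40                   # IPv4 header 20 + TCP header 20


def max_payload_mb_per_s(link_bps=LINK_BITS_PER_SECOND, mtu=MTU):
    """Return the theoretical TCP payload rate in MB/s (1 MB = 10**6 bytes)."""
    wire_bytes = mtu + ETHERNET_OVERHEAD    # bytes occupied on the wire per frame
    payload_bytes = mtu - IP_TCP_HEADERS    # useful data carried per frame
    return link_bps / 8 * payload_bytes / wire_bytes / 1_000_000


def percent_of_ceiling(measured_mb_per_s):
    """Express a measured rate as a percentage of the theoretical ceiling."""
    return 100 * measured_mb_per_s / max_payload_mb_per_s()


if __name__ == "__main__":
    print(f"Ceiling: {max_payload_mb_per_s():.1f} MB/s")
    # 40.0 is a made-up sample value, replace it with a real benchmark figure.
    print(f"Sample: {percent_of_ceiling(40.0):.0f}% of ceiling")
```

Under these assumptions the ceiling works out to roughly 118.7 MB/s for one link. As far as I understand, a single copy stream normally travels over only one member of a NIC team, so the team would not raise this figure for a single client.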

Any tips or insight regarding this issue?
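In case it helps with suggestions: one way I could take the disks out of the picture completely would be a raw TCP throughput test between two machines, with no file share involved. A proper tool such as iperf would be the better choice; the following is only a minimal Python sketch of the idea. The function names, the 64 KiB chunk size and the loopback demo are my own choices, and the timing is approximate because the sender stops the clock when its data has been handed to the network stack, not when the receiver has read it.

```python
import socket
import threading
import time

CHUNK = 64 * 1024  # bytes per send/recv call (arbitrary choice)


def open_sink(bind_addr="127.0.0.1", port=0):
    """Listen on bind_addr:port and discard all data from one connection.

    Returns (listening_socket, worker_thread). Port 0 lets the OS pick one.
    """
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind((bind_addr, port))
    server.listen(1)

    def drain():
        conn, _ = server.accept()
        with conn:
            while conn.recv(CHUNK):  # empty bytes means the sender closed
                pass

    worker = threading.Thread(target=drain, daemon=True)
    worker.start()
    return server, worker


def measure_throughput(host, port, total_bytes):
    """Send at least total_bytes of zeros to host:port, return MB/s."""
    payload = bytes(CHUNK)
    sent = 0
    start = time.perf_counter()
    with socket.create_connection((host, port)) as sock:
        while sent < total_bytes:
            sock.sendall(payload)
            sent += len(payload)
    elapsed = time.perf_counter() - start
    return sent / elapsed / 1_000_000


def loopback_demo(total_bytes=32 * 1024 * 1024):
    """Run sink and sender on the same machine, just to show the script works."""
    server, worker = open_sink()
    port = server.getsockname()[1]
    rate = measure_throughput("127.0.0.1", port, total_bytes)
    worker.join(timeout=10)
    server.close()
    return rate


if __name__ == "__main__":
    print(f"Loopback: {loopback_demo():.0f} MB/s")
```

For a real test the sink would run on the virtual server, bound to an address reachable from the network (for example `open_sink("0.0.0.0", 5201)`, where the port number is just an example), and `measure_throughput` would be called from the laptop or the Win 7 VM with the server's address. If that number is also far below gigabit speed, the disks are probably not the culprit.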
