I thought that switching from NFS to iSCSI would provide increase performance for datastores on ESXI.

I just started looking at migrating from NFS to iSCSI on a 40gbe network with jumbo frames.

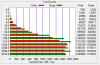

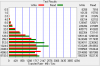

I ran a very simple benchmark, and I didn't expect it, but NFSv4 was faster than NFSv3 which was in turn faster than iSCSI (see below).

So I'm reevaluating whether to pursue iSCSI.

The file server is Ubuntu 18.04 DL380 Gen8 providing storage to ESXI 6.7 DL 360 Gen9 hosts.

The network is IPv4 and jumbo frames using 40Gbe ConnectX-3 Pro cards connected to an Arista DCS-7050QX.

The datastore is a zfs pool of 24 vdevs consisting of mirrored pairs (48 10KRPM 6Gb/s SAS drives) in two D2700 enclosures, each with a single connection to an HP H241 controller.

Send and receive buffers are tuned for 40gbe, but other than that, the tuning is pretty much default.

To measure the performance, I ran an ATTO disk benchmark in a Windows 10 VM. The same VM was mounted via NFSv3, NFSv4 and iSCSI, to obtain the results below. The same datastore was used for all three. For the iSCSI the VM's disk was migrated to a zvol in the same zfs pool, mounted via iSCSI.

What do you all think?

iSCSI:

NFSv3:

NFSv4.1:

I just started looking at migrating from NFS to iSCSI on a 40gbe network with jumbo frames.

I ran a very simple benchmark, and I didn't expect it, but NFSv4 was faster than NFSv3 which was in turn faster than iSCSI (see below).

So I'm reevaluating whether to pursue iSCSI.

The file server is Ubuntu 18.04 DL380 Gen8 providing storage to ESXI 6.7 DL 360 Gen9 hosts.

The network is IPv4 and jumbo frames using 40Gbe ConnectX-3 Pro cards connected to an Arista DCS-7050QX.

The datastore is a zfs pool of 24 vdevs consisting of mirrored pairs (48 10KRPM 6Gb/s SAS drives) in two D2700 enclosures, each with a single connection to an HP H241 controller.

Send and receive buffers are tuned for 40gbe, but other than that, the tuning is pretty much default.

To measure the performance, I ran an ATTO disk benchmark in a Windows 10 VM. The same VM was mounted via NFSv3, NFSv4 and iSCSI, to obtain the results below. The same datastore was used for all three. For the iSCSI the VM's disk was migrated to a zvol in the same zfs pool, mounted via iSCSI.

What do you all think?

iSCSI:

NFSv3:

NFSv4.1:

Attachments

-

9.1 KB Views: 3

-

9.4 KB Views: 3

-

9.4 KB Views: 3