Perfect... short depth, less than 20", would be out of this world.

For my part, an ideal server would be a 3U chassis with 12x 3.5" bays in the bottom 2U and 8x 2.5" SATA/SAS/NVMe bays on top. I'd pay good money for something like that, or like what you were describing; either would be very nice.

Designing 1U/2U/3U/4U rackmount server chassis - these will be going to production - looking for input, ideas, and feedback!

- Thread starter SligerCases

Noted on this; you could get some serious rack density with such a layout.

I don't buy 3U precisely because you end up with some waste at the top that usually has room for a slim optical and controls or front-panel ports.

But, I'd buy what you describe in a heartbeat.

Only thing I am skeptical on is the slim optical. Seems like that might be fairly niche, particularly if the front has 10Gbps USB-C?

#1 Yes - I am all about leaving space for cable management. One of the biggest gripes I have with some of the cases I bought to test out / see what sucks is that there were several places where I had to smash and pry cables into tight spots. (Clearly whoever designed these didn't actually try to build in them.)

The location of the PSU: PSU cables don't come out at a 90-degree angle. Is there enough space between where the PSU cables come out and where the power and data cables go to the yellow drive area that the fit is not "horrible"?

I plan to offer multiple depths of cases as well, so if the 17"-deep version is too short for a very long ATX PSU, you can go with a 20" version to give that extra room between the PSU and drives.

Can you post some pictures of this? Not sure which Rosewill case you're speaking of, but some of them have very questionable airflow to begin with.

Would an array of 7200 RPM drives or SAS SSDs have enough airflow around them to stay cool if they abut the PSU like that? I've had to rig some special fan setups in my Rosewill to cool 2.5" SAS HDDs/SSDs.

If uploading here is a PITA, can you email me?

Awesome info!

@SligerCases

Thank you so much for addressing the niche "home" rack market!

I've been looking for such cases for years!

Please consider making a slightly deeper 4U case, with mounting points for a 360mm radiator and also a pump/reservoir, plus mounting points for a water distribution block/manifold with quick disconnects (so you can service the CPU and GPU blocks independently). Also, it would be nice to have cutouts at the back of the case for quick-disconnect bulkheads and/or cable passthrough.

My current setup:

I have 3x Silverstone RM502. I drilled holes at the back for quick disconnect fittings, which I run to an external 420x420mm radiator.

At only 468mm deep, the RM502 is really cramped once you put in all the fans, tubing, and power cables; I'm afraid that the airflow to my passive components is restricted.

I'd like to see a slightly deeper case, 600mm-650mm to allow for cable management and allow more room at the front top for more 5.25" or other expansion bays.

Nice to haves:

A painted interior.

A top panel that can be removed toolessly.

I would like to point out the Alphacool ES 4U. It looks like a great case, although it does not come with sliding rails, which is disappointing.

Server Racks

1U, 2U, 3U and 4U server racks, designed specifically for integrating water cooling. Ideal for efficient cooling in server environments. www.alphacool.com

I know a 5U would be even more niche, but it would allow for the height of standard waterblocks for consumer cards (some are too tall to fit into 4U). You could also have front-facing PCIe (with riser cables).

Sorry for the ramble. Looking forward to these cases, I hope you ship to Australia!

I have two options that are pretty close, but you might need something even bigger than these.

I have 20" deep 4U that is targeted for thick 360mm radiator + push/pull fan configuration:

Then I have a 25" deep 4U that supports 8x 5.25" bays at front, with a tool-less fan bracket in the middle for mounting a 360mm radiator:

The motherboard tray on these cases slides out a bit to make building easier too if that helps with your fits?

(Also has some pretty cool internal server rack brushes on sides of the fan bracket for ducting /cable management!)

Both of these are possibly too small, though... I am thinking something ~30" deep, to allow a really thick radiator, push/pull fans, an EATX motherboard, and keep the same 8x 5.25" bay config?

Do you have pictures of your build you could post?

Can you also send me the models of distro-blocks, and the radiator?

I have! Will have to do another thread about this, as it's pretty unique.

Keep in mind, most of these solutions are for home use with at most a single rack for solutions that are racked.

Have you thought about a model dedicated to being mounted on the wall? (Look, we all do it, no one is judging anyone.)

Have you put any thought into a form factor similar to the Synology DS2422+? I've really only seen similar NAS cases that go up to 8 bays. 12 (or better yet 16) bays would be ideal so long as it can take an ITX motherboard and has room for a single expansion slot.

I guess I wasn't clear.

Only thing I am skeptical on is the slim optical.

What I meant was that most 3U systems have that small amount of space at the top that is often used for a control panel or slim optical, which I consider a monumental waste of real estate. This is because they don't have the mix of 2.5" and 3.5" drives, and run 4x 3.5" drives in each column, and I don't know if the space above is even enough for a 2.5". If it is, a 3U with 16x 3.5" and 5x 2.5" would be OK, too.

I'm still more of a 2U or 4U guy, as those have exact fits for both 3.5" (horizontal) and 2.5" (vertical), and you could in theory mix and match in 4 "blocks" of disks, where a block would hold 2x 3.5" or 6x 2.5".
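Taking the "blocks" idea literally, the possible drive mixes can be enumerated in a few lines. This is a minimal sketch assuming, as the post does, exactly four interchangeable blocks, each holding either 2x 3.5" or 6x 2.5" drives; `drive_mixes` and the constants are hypothetical names for illustration, and real bay geometry (backplanes, cage walls) is ignored.

```python
# Hypothetical helper: enumerate drive mixes for a chassis built from
# interchangeable "blocks", per the 2U/4U layout described above.
# Assumption (from the post): 4 blocks, each holding 2x 3.5" or 6x 2.5".
BLOCKS = 4
PER_BLOCK_35 = 2
PER_BLOCK_25 = 6


def drive_mixes(blocks: int = BLOCKS) -> list[tuple[int, int]]:
    """Return (num_3_5_inch, num_2_5_inch) for every split of the blocks."""
    mixes = []
    for n35_blocks in range(blocks + 1):
        n25_blocks = blocks - n35_blocks
        mixes.append((n35_blocks * PER_BLOCK_35, n25_blocks * PER_BLOCK_25))
    return mixes


if __name__ == "__main__":
    for n35, n25 in drive_mixes():
        print(f'{n35:2d}x 3.5" + {n25:2d}x 2.5"')
```

Running it lists five mixes, from 24x 2.5" with no 3.5" drives up to 8x 3.5" with no 2.5" drives.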

Yep! Will get some rackmount NAS cases going first, then some SFF desktop NAS cases like you describe are up next.

I ran a poll in r/homelab about 3 months ago when I couldn't find any rack case that would fit in my small downtown house (the data room is only 32" deep), and was going to try to get Protocase to design one. Overwhelmingly, everyone seemed to want a short-depth chassis, especially for a NAS.

In that same line, front I/O was much more important than hotswap drives. I've been trying to get access to Supermicro front I/O short depth chassis to see how they actually feel, but they've been out of stock for the better part of a year and a half.

Finally, I'm trying to either combine my two open-bench NAS servers in one larger rack case, or place them in two SFF rack cases.

The top line PRD for the larger case was:

- 3U Chassis split into two levels, mobo + psu in upper level, hdds in lower level

- Fits an ATX/EATX board like a Tyan S8030GM2NE or AsRock EPC621D8A

- IEC C14 inlet to C13 Passthrough cable for PSU from front left of chassis to rear of chassis

- Front I/O, mobo mounted to left of chassis (just right of C14 inlet)

- PSU mounted at rear of chassis behind PCIe cards.

- 2x2 PCIe risers

- 16x 3.5" mounted in drawer under mobo in a 4x2x2 configuration (WxDxH)

- 3x 120mm rear mounted fans
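A rough space check for the 4x2x2 drawer in the PRD can be sketched as below. The drive dimensions are the approximate envelope of a standard 1"-high 3.5" HDD (about 101.6 mm wide, 147 mm long, 26.1 mm high) and 1U is 44.45 mm; the function names are hypothetical, and the sketch ignores cage walls, rails, sheet metal and airflow gaps, so the drive envelope is a lower bound on space used and the height left above it is an upper bound.

```python
# Approximate envelope of a 1"-high 3.5" HDD, in mm.
DRIVE_W_MM = 101.6
DRIVE_L_MM = 147.0
DRIVE_H_MM = 26.1
RACK_UNIT_MM = 44.45  # 1U = 1.75 in


def drawer_envelope(cols: int, rows_deep: int, layers: int) -> dict:
    """Drive count and raw envelope for a cols x rows_deep x layers grid.

    Ignores cage walls, rails, cabling and airflow gaps, so real
    dimensions will be larger.
    """
    return {
        "drives": cols * rows_deep * layers,
        "width_mm": cols * DRIVE_W_MM,
        "depth_mm": rows_deep * DRIVE_L_MM,
        "height_mm": layers * DRIVE_H_MM,
    }


def height_left_mm(chassis_units: int, layers: int) -> float:
    """Vertical space above the drive layers, before sheet metal and rails."""
    return chassis_units * RACK_UNIT_MM - layers * DRIVE_H_MM


if __name__ == "__main__":
    env = drawer_envelope(4, 2, 2)  # the PRD's 4x2x2 (W x D x H) drawer
    print(env)
    print(f"left for the motherboard level in 3U: {height_left_mm(3, 2):.1f} mm")
```

For the 4x2x2 layout this gives 16 drives in roughly 406 x 294 x 52 mm, leaving at most about 81 mm of the 3U height for the motherboard level before any sheet metal is accounted for.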

What are you using to lay out your case design? SolidWorks or AutoCAD?

Definitely dying for some short-depth rackmount solutions in the future.

I couldn't find any when I was re-doing my lab that worked out for me, so I ended up with 3U and 2U silverstone HTPC cases on a shelf =P

In my case, the side to side airflow works out well since I have them pushed back against the wall.

-- Dave

@SligerCases your designs look top notch. I love the faceplate design you have that can cover either hot-swappable drives or intake fans. I've been searching the ends of the interwebz for a shorter 4U that can accommodate 140mm fans in front and a 12" GPU (RTX 3080). NO external 5.25" bays or hot-swappable drive access needed. I have seen the NORCO RPC-431 modded to fit 3x 140mm across the front with relative ease, so I know 4U cases can fit them. I'm currently running the really long RSV-L4000; I just don't need two rows of fans.

** After checking your website, I do see the CX4150a and CX4170a match very closely, but with 120mm fans. Looks like the power button and USB ports would have to be relocated to make room for 140mm fans. Maybe a dual design, or a swappable plate for 120mm and 140mm fans. One can only wish, right?

*** What kind of filter setups have you seen people use with your cases?

New to the thread, and liking what I see!

My only comment, which seems you are doing, is to make the chassis have 8 PCIe expansion slots!

That's been the biggest gripe from all the server chassis I have, that I can't fit/hang a GPU off of the last slot!
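The reason a GPU in the last slot needs an eighth opening can be shown with simple slot arithmetic. This sketch assumes a standard ATX board with 7 slot positions and a card whose bracket and cooler span `card_width_slots` consecutive openings starting at its own slot; the helper names are hypothetical.

```python
ATX_SLOT_POSITIONS = 7  # an ATX board has at most 7 expansion slot positions


def openings_needed(slot: int, card_width_slots: int) -> int:
    """Chassis openings (counted from slot 1) needed for a card in `slot`."""
    if not 1 <= slot <= ATX_SLOT_POSITIONS:
        raise ValueError("slot must be between 1 and 7 on an ATX board")
    if card_width_slots < 1:
        raise ValueError("card_width_slots must be at least 1")
    # A thick card occupies its own opening plus the following ones.
    return slot + card_width_slots - 1


def fits(slot: int, card_width_slots: int, chassis_openings: int) -> bool:
    """True if the chassis has enough openings for the card's full width."""
    return openings_needed(slot, card_width_slots) <= chassis_openings


if __name__ == "__main__":
    for openings in (7, 8):
        ok = fits(7, 2, openings)
        print(f"2-slot GPU in slot 7, {openings} openings: {'fits' if ok else 'blocked'}")
```

A 2-slot card in slot 7 needs openings 7 and 8, so it fits an 8-opening chassis but not a 7-opening one.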

On a personal note, I'm after lots of HDD storage (24, 36, 56, etc). However, there was a time I wanted a silent HTPC shiny chassis for my audio rack.

Short depth is needed for those projects because most audio racks are, well, short depth.

I originally was thinking 3x 140mm fans at the front, but as you said, very little room left for power button/USB/etc.

I also read some reviews of newer 120mm fans vs 140mm fans, and the new Noctua A12 and Phanteks T30 are so close to the performance of 140mm fans (or in some cases, better) that I decided 3x 120mm > 2x 140mm.
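A crude geometric comparison of the two front-fan layouts is sketched below. It treats each fan's frame size as its blade diameter and ignores the hub, so it overstates swept area for both; the helper names are hypothetical, and real performance depends on fan curves and case impedance, which is what the reviews measured.

```python
import math


def swept_area_mm2(fan_mm: float, count: int) -> float:
    """Gross swept area of `count` round fans, using frame size as diameter."""
    radius = fan_mm / 2
    return count * math.pi * radius * radius


def row_width_mm(fan_mm: float, count: int, gap_mm: float = 0.0) -> float:
    """Width of a row of square fan frames with `gap_mm` between neighbours."""
    return count * fan_mm + (count - 1) * gap_mm


if __name__ == "__main__":
    for size, n in [(120, 3), (140, 2), (140, 3)]:
        print(f"{n}x {size}mm: area ~{swept_area_mm2(size, n):,.0f} mm^2, "
              f"row width {row_width_mm(size, n):.0f} mm")
```

By this measure 3x 120mm has roughly 10% more gross area than 2x 140mm (about 33,900 vs 30,800 mm²), while 3x 140mm needs a 420 mm row, which leaves little front-panel width for buttons and ports.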

I am working hard on the hot swap drive feature. Lots of good ideas in this thread for what people are looking for with those.

The current cases are able to just drop in filter material between the front panel and subfront. Can really use anything that you can cut to size. I took a carbon pre-filter out of my standing air filter, cut it down, and stuck it on one of the 3Us to test. Worked pretty good. Also working on magnetic filters from Demci-filter!

Glad that 8th slot is getting recognized! I had a ton of people who do GPU builds ask for that. Can't believe so many other companies overlooked doing that, particularly these days.

I am still working on the NAS cases. That drive requirement - would that need to be front loading, or could it be top loading?

I am really thinking that something like the 45Drives cases for DIY builders is something I am going to do. It's also the only way I see to do that many drives with any real density.

Do you mind linking me what those cases are, and a rough idea of what specs you got on each?

It's a very impressive setup considering what you're working with.

Can you link me that poll? Would be very useful info!

I ran a poll in r/homelab about 3 months ago when I couldn't find any rack case that would fit in my small downtown house (the data room is only 32" deep) and was going to try to get protocase to design one. Overwhelmingly everyone seemed to want a short depth chassis, especially for a NAS.

In that same line, front I/O was much more important than hotswap drives. I've been trying to get access to Supermicro front I/O short depth chassis to see how they actually feel, but they've been out of stock for the better part of a year and a half.

Finally, I'm trying to either combine my two open-bench NAS servers in one larger rack case, or place them in two SFF rack cases.

The top line PRD for the larger case was:

If you could design a rackmount case along those lines, that would be amazing! (And it would save me all the time I'd spend relearning SolidWorks and begging my old uni profs for access to the uni's workshop.)

- 3U Chassis split into two levels, mobo + psu in upper level, hdds in lower level

- Fits an ATX/EATX board like a Tyan S8030GM2NE or AsRock EPC621D8A

- IEC C14 inlet to C13 Passthrough cable for PSU from front left of chassis to rear of chassis

- Front I/O, mobo mounted to left of chassis (just right of C14 inlet)

- PSU mounted at rear of chassis behind PCIe cards.

- 2x2 PCIe risers

- 16x 3.5" mounted in drawer under mobo in a 4x2x2 configuration (WxDxH)

- 3x 120mm rear mounted fans

What are you using to lay out your case design? SolidWorks or AutoCAD?

Interesting to hear on front IO. I've been working on some 2U, 3U, and 4U layouts for front IO - but I wasn't sure how popular it'd be. I also had several 1U designs, but those are all motherboard specific.

My only concern with this specific setup is that the drawer for the HDDs might be expensive, but it is an interesting layout. Could it possibly be done via a 1U DAS and a 2U compute node instead?

I am using Siemens Solid Edge - I love it.

No kidding! And thank you for the 8 slots! Here's an idea to keep in mind with EPYC/Rome server-type builds: there is no chipset. This means we are basically free to install the GPU in any slot we see fit. For servers, this is especially nice for precisely controlling the airflow through the chassis, fans, M.2, NVMe drives, etc. Sure, you can do this with Intel and other AMD chipsets, but you have to be particular about which exact slot you use.

I mention this because I have an idea that I haven't seen any other case designer do. And since you are thinking like that, I'll give you this idea to consider. Always wanted to design a chassis myself...

The idea is to put other system components into the GPU area that would normally clash with those PCIe slots next to the CPU (you need 2x or 3x slot widths for GPUs!!). Think 16 or 20 drive bays. Think water cooling component locations, rads, etc.

The idea is that the builder could move the GPU, say, to the very side/top/bottom of the chassis - into the last slot. Then you have all of that room between the GPU and CPU cooling area (which is pretty low with AIOs).

Obviously, that's a very small use case. But, maybe... maybe something modular.

NAS 4U for a rack? To me, it doesn't matter as a homelabber. You tell the family, "hey, no one can watch TV for a while", shut down, and just pull the beast out of the rack.

I am still working on the NAS cases. That drive requirement - would that need to be front loading, or could it be top loading?

To let ya know a few existing designs: a front-loader that has pretty much maxed out all the capacity you can get would be the Supermicro SC846 at 24 bays. That's 24x 3.5" in the front, filling the entire front (and rear) area.

The Supermicro SC847 is a much more interesting chassis to study, though, especially if one were to use a 2U riser to mount a GPU sideways?! Search for pics of the 36-bay AND 45-bay variants. NOTE: the 36-bay variant only has room for half-height PCIe cards and CPU coolers, whereas the 45-bay variant has no room for any motherboard whatsoever.

As for connectivity, you don't need to have a backplane for "Front-Loader" types like this. The drives can slip in and have their asses exposed for connections.

If you are open to playing with different form factors, you could investigate cramming an mATX or ITX into a 36, 45, etc-bay build! That would make for a fairly compact design.

Now, for top-loaders... In top-loaders, I've seen 45-, 60-, and even 90-bay systems. However, I think that would be a fairly difficult design, mainly because of the tight clearance from placing a drive vertically. This is why manufacturers like Supermicro and HP have created very large PCB "backplanes" that are nothing more than right-angle power and SAS connectors (and now even U.2!) placed where each drive is inserted.

That is, unless we can find an endless supply of dirt cheap PCB SAS-connector backplanes?

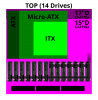

Also, about the max you can cram in vertically and still have plenty of airflow is 15x 3.5" drives side by side, so each row is 14 or 15 drives (some top-loaders use 14-bay rows). If you want some inspiration, look at the Supermicro 60-bay, as it has a little room for an ATX board if you design your own chassis (the 90-bay chassis cannot fit any motherboard!).
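The "about 15 drives per row" rule of thumb follows from standing 3.5" drives on edge: each one is then about 26.1 mm wide, and n drives with a gap g between neighbours need n*t + (n-1)*g of width, so n <= (W + g) / (t + g). A minimal sketch, where the 430 mm usable width and the gap values are hypothetical placeholders rather than measurements of any specific chassis:

```python
import math

DRIVE_THICKNESS_MM = 26.1  # nominal 1"-high 3.5" HDD, stood on its edge


def drives_per_row(usable_width_mm: float, gap_mm: float,
                   thickness_mm: float = DRIVE_THICKNESS_MM) -> int:
    """Largest n with n*thickness + (n - 1)*gap <= usable width."""
    if usable_width_mm < thickness_mm:
        return 0
    return math.floor((usable_width_mm + gap_mm) / (thickness_mm + gap_mm))


def total_bays(rows: int, per_row: int) -> int:
    """Bay count for identical rows."""
    return rows * per_row


if __name__ == "__main__":
    for gap in (2.0, 4.0):
        print(f"gap {gap} mm -> {drives_per_row(430.0, gap)} drives per row")
    print("4 rows of 15 ->", total_bays(4, 15), "bays")
```

With the assumed 430 mm, a 2 mm gap gives 15 drives per row and a 4 mm gap gives 14, one plausible reason some designs settle on 14-bay rows for more airflow. Multiplying by the number of rows gives a bay count (4 rows of 15 is 60), but that is arithmetic, not a statement about how any particular product is laid out.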

The Cisco C3260 is another interesting chassis to study as a top-loader. You can think of replacing the rear two nodes with, say, an mATX or ITX board.

Good luck!

The cases are:

Top left: SilverStone 380 for the Xeon D-1541 FreeNAS server, which runs a dozen VMs plus 40TB of raw storage connected with onboard 10gig.

The 4U cases on the right are Silverstone GD09B: SILVERSTONE Black Grandia GD09B HTPC Case - Newegg.com

They each run a 750W PSU, a Xeon 2648Lv2 10-core CPU, 2.4TB of PCIe flash, 10GbE networking, and 128GB of DRAM.

The 2U is a Silverstone ML04B: SILVERSTONE Black ML04B Micro ATX Media Center / HTPC Case - Newegg.com

This also houses a 550W PSU, a Xeon D-1541 8-core CPU, 128GB of DRAM, onboard 10gig networking, and 3.2TB of PCIe flash storage.

-- Dave

^^ This... I'm running one of their magnetic filters in the front of my Rosewill case as we speak; it definitely helps and is easy to clean. It covers the entire width, even the USB ports and power/reset buttons, and totally blacks out the front of the case.

Are you designing one that fits on the very outside or directly over the fans? Interested in what your plans are.

Bummed to hear no plans for jamming 3 x 140mm fans and putting the power button/usb port at the very extreme corner (subtle hint hehehe)

Though I guess I could get a blank aluminum plate custom cut to fit around magnetic face plate holders and rivet it on. Would then give me freedom to cut my own fan holes, button, and usb ports.

I've been buying / installing a lot of new stuff into older 1U-4U GPU and cheap ebay cases lately so I'll offer what I look for as a consumer. Overall I think you're trying to do too many things and need to focus on a niche and offer something unique there.

Benchmarks to consider:

Density: 3-4 GPUs per 1U for 2 slot workstation cards (4 node 4U servers typically store 12 two slot GPUs and some 1U servers can store 4 apiece). 2-3 GPUs per 1U for commercial cards (depends a lot on the size / model.) For workstations you may be competing against lower density due to noise considerations.
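The per-U figures above come from dividing GPU count by chassis height. A trivial sketch using the two examples given (12 GPUs in a 4-node 4U, 4 GPUs in a 1U); the function name is hypothetical, and the 40U of usable rack space in the example is an arbitrary illustration, not a recommendation.

```python
def gpus_per_u(gpus: int, rack_units: int) -> float:
    """Average GPU density of a chassis, in GPUs per rack unit."""
    if rack_units <= 0:
        raise ValueError("rack_units must be positive")
    return gpus / rack_units


EXAMPLES = {
    "4-node 4U, 2-slot cards": (12, 4),
    "1U, 2-slot cards": (4, 1),
}

if __name__ == "__main__":
    usable_units = 40  # hypothetical usable rack space
    for label, (gpus, units) in EXAMPLES.items():
        density = gpus_per_u(gpus, units)
        print(f"{label}: {density:.1f} GPUs/U, "
              f"~{int(density * usable_units)} GPUs in {usable_units}U")
```

The two examples land at 3 and 4 GPUs per U, which is where the 3-4 range for 2-slot workstation cards comes from.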

Cost: $200-300ish per case is competitive, I think, if you have a good design, maybe more depending on quality (but people are cheap). PC gamers will want even cheaper (I can pick up a mITX case at Micro Center for $50 that'll fit a 5950X and RTX 3080, for example).

Other

Airflow: I've run into airflow issues running commercial Ryzen motherboards in 1U cases because, except for the X470D4U, the RAM modules on all commercial boards are basically rotated 90 degrees compared to server motherboards, and this typically blocks a lot of the airflow through the case. A 2U design can help with this (I have a 3900X on an 85W-TDP-rated cooler that runs OK in a 2U with additional fans, but a 1U is much more challenging). A 3U case, I believe, would let people run the Wraith Prism coolers, so that's a benefit over 1U/2U. It might also help accommodate some of the gigantic commercial GPUs.

ATX vs mATX/ITX: Maybe it's better to throw away the idea of ATX support for the smaller cases. I've always noticed trade-offs with one or the other, and if you support both you get the worst of both worlds. If you're targeting large volume and cheap prices this could work, but if you want to make high quality niche products I think it'll work against you.

Power Supplies: I don't know how easy/possible this would be, but what about adding a removable/optional bracket to allow for installation of server PSUs? That can help improve airflow and lower cost for consumers, since things like the Ablecom PWS-521-1H PSU are available cheaply on the used market. One of the better designs I saw in a server case was a removable bracket behind the PSU that mounted into a hole in the bottom. Since you'd need to be flexible with multiple types of PSUs, maybe a slot cut out behind where they would generally end would work? That plus some sort of bracket on the outlet seems like it would secure them in place well enough. This could allow you to do things like mount the hard drives above the PSU instead of at the front of the case, allowing for more fans or something.

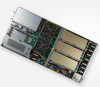

Horizontal GPU mounting: I see this a lot and it also works pretty well, especially when you're not dealing with a 4U case. Attached is an example of a 1U 4-GPU server. Since these are generally custom, they can fit a 2-socket motherboard in there along with the drive bays and stuff. If you wanted to make a similar 1U or 2U case, you could maybe fit an mATX motherboard and PSU in there at the loss of 1 GPU space, which would be compelling for people who want to run commercial hardware in a similar-size footprint. It wouldn't be as efficient density-wise but could be a lot cheaper to set up on your own. A 1U size severely limits you to basically 2-slot professional GPUs and some commercial models, but a 2U case lets you either stack two professional GPUs on top of each other or potentially fit 1 large consumer GPU on its own.
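The 1U versus 2U limits described above fall out of slot arithmetic when a card lies flat. A minimal sketch, assuming a card's thickness is its slot count times the standard 0.8 in (20.32 mm) slot pitch and that 1U is 1.75 in (44.45 mm); `clearance_mm` is a hypothetical lumped allowance for sheet metal and airflow, not a measured value.

```python
import math

RACK_UNIT_MM = 44.45   # 1U = 1.75 in
SLOT_PITCH_MM = 20.32  # expansion slot pitch = 0.8 in


def max_flat_card_slots(units: int, clearance_mm: float = 2.0) -> int:
    """Thickest card (in slots) that fits lying flat in a chassis of `units`."""
    usable_mm = units * RACK_UNIT_MM - clearance_mm
    return max(0, math.floor(usable_mm / SLOT_PITCH_MM))


if __name__ == "__main__":
    for u in (1, 2, 3):
        print(f"{u}U: up to {max_flat_card_slots(u)} slots of card thickness")
```

This gives 2 slots for 1U (one 2-slot card) and 4 slots for 2U (two stacked 2-slot cards, or one consumer card up to about 4 slots thick), in line with the post; real limits are lower once risers and mounting hardware are counted.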

Generally I've found Supermicro systems to be pretty well designed. You can look to them for ideas of cases that work with ATX/mATX motherboards. For example, their 4U GPU servers are basically just a giant box that fits 8 GPUs. They look a little longer than yours, but that allows them to put the motherboard in the middle, improve density, and provide better airflow.

Here you go: www.reddit.com/r/homelab/comments/sm3ig2/would_you_prefer_a_halfwidth_or_fullwidth_nvme/

The drawer is actually based on an earlier Netflix Open Connect appliance, with a little bit of serviceability. Essentially, the drawer is nothing more than a piece of sheet metal sitting on a pair of chassis rails. The drawer then just has 2.5"/3.5" generic HDD cages screwed into it.

To service it, you would unscrew the faceplate, pull out the drawer, unscrew whichever cage you wanted, and pull it out.

It's a super low-tech solution: a middle ground between cost, connection flexibility, serviceability, and density. My motivation for it is that it allows me to use U.2 NVMe drives in the same environment as 3.5" drives without having to shell out for a barebones with an NVMe chassis. I could just route the appropriate cables down to the basement and be golden.

The only important thing to note for anyone trying to use longer cables for NVMe is that you need a redriver card, if not a retimer card, when routing cables from the PCIe card at the front of the chassis to the back of the chassis, down to the basement, then back to the front. That run length is usually between 50cm and 100cm, which is longer than the PCIe 4.0 spec allows for without a retimer/redriver card.
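One way to reason about when a redriver or retimer becomes necessary is an insertion-loss budget rather than a raw length. The sketch below assumes the commonly cited PCIe 4.0 end-to-end budget of about 28 dB at 8 GHz; the per-centimetre cable loss, the fixed loss for board traces and connectors, and the segment lengths are all hypothetical placeholders to be replaced with datasheet values.

```python
from collections.abc import Iterable
from dataclasses import dataclass

# Commonly cited PCIe 4.0 end-to-end insertion-loss budget (16 GT/s,
# evaluated at the 8 GHz Nyquist frequency). Verify against the spec.
PCIE4_BUDGET_DB = 28.0


@dataclass
class Segment:
    name: str
    length_cm: float
    loss_db_per_cm: float  # hypothetical; use the cable datasheet value


def channel_loss_db(segments: Iterable[Segment], fixed_loss_db: float) -> float:
    """Total loss: fixed board/connector loss plus loss of each cable segment."""
    return fixed_loss_db + sum(s.length_cm * s.loss_db_per_cm for s in segments)


def needs_conditioning(segments: Iterable[Segment], fixed_loss_db: float,
                       budget_db: float = PCIE4_BUDGET_DB) -> bool:
    """True if the estimated loss exceeds the budget (redriver/retimer territory)."""
    return channel_loss_db(segments, fixed_loss_db) > budget_db


if __name__ == "__main__":
    # Route from the post: card -> rear of chassis -> basement -> front.
    route = [
        Segment("card to rear", 20, 0.25),
        Segment("rear to basement", 40, 0.25),
        Segment("basement to front", 30, 0.25),
    ]
    fixed = 10.0  # hypothetical host traces + connectors + drive side
    loss = channel_loss_db(route, fixed)
    print(f"estimated loss {loss:.1f} dB, needs redriver/retimer: "
          f"{needs_conditioning(route, fixed)}")
```

With these placeholder numbers the 90 cm route comes to 32.5 dB and exceeds the budget, while a 30 cm run would not. A redriver only amplifies and equalizes the signal whereas a retimer recovers and retransmits it, so which one a given run needs depends on more than this single number.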

The response I've gotten when I've had to architect for those systems was that we should switch to a high-density GPU barebones instead (both Gigabyte and Supermicro have decent options, or try to negotiate a small Inspur or Wiwynn order). Most vendors use risers to deliver the density in the rear and side of the chassis, and I think the reasoning is that if you're paying for more than one GPU, especially something in the A-series, a GPU-focused barebones is a rounding error at that point. Most of them have enough space for 6x 2-slot GPUs, which will pretty much saturate an EPYC platform.

My personal preference is always for risers with flexible cables, as they allow you to plan out airflow and PCIe placement without having to care about which mobo you end up with.

I am using Siemens Solid Edge - I love it.

I'll grab a trial. I like the fact that they still have perpetual license options. PLM software has a 10-year lifecycle, so I don't know why anyone would get a subscription instead.

@SligerCases...140mm fans...

If you're going for quiet, even 3x 120mm fans in a 4U is really pushing it as far as noise. Although fans blow air axially, they pull air entirely from the sides, or perimeter.

There are a few quick and easy experiments to observe this. First, cover the intake of an open fan with a flat surface and then move the surface about 3/4" to 1" away from the fan. Even though the axial direction is blocked, the air flow is not impeded, because the intake is drawing air from the perimeter.

A second experiment is to cup the perimeter of the intake of an open fan with your hands, or use four surfaces to block the perimeter. Flow decreases, and turbulence and noise increase, even though nothing is blocked axially.

For a third experiment, use a Halloween smoke machine to observe the air flow being drawn from the intake perimeter. Then place 3x fans inline right next to each other and use the smoke to observe the flow. Air is only being drawn from the perimeter of the entire assembly, not axially and not from between the fans. Then space the fans about an inch apart, and you'll observe the smoke begin to also flow in between each of the fans, creating 25% more air flow efficiency and less intake turbulence and noise.
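A crude geometric way to see why spacing matters, assuming (as the post describes) that intake air comes mainly from the frame edges: model each fan frame as a square and count how much edge is exposed. This is only a sketch of exposed edge length, not an airflow model, and it does not attempt to reproduce the 25% figure, which comes from the experiment above.

```python
def exposed_perimeter_mm(n_fans: int, fan_mm: float, spaced: bool) -> float:
    """Edge length available for perimeter intake, treating each fan frame
    as a fan_mm x fan_mm square (a deliberately crude model)."""
    if spaced:
        # Each fan sits far enough from its neighbours that all four of its
        # edges can draw air, so the edges simply add up.
        return n_fans * 4 * fan_mm
    # Fans butted together act as one (n_fans * fan_mm) x fan_mm rectangle:
    # the shared inner edges disappear and only the outline is exposed.
    return 2 * (n_fans * fan_mm + fan_mm)


for label, spaced in (("touching", False), ("spaced", True)):
    print(f"3x 120mm, {label}: {exposed_perimeter_mm(3, 120, spaced):.0f} mm of exposed edge")
```

In this model, butting three fans together hides the four inner edges they share, which is consistent with the smoke test showing no draw between touching fans.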

Also, the intake side is where all the noise is, so it's important to maximize the air space around each fan intake. I've not yet seen this dynamic recognized in the quiet PC industry.

The Sliger rack mount enclosures are the best designs I've seen after searching for years.

If you're planning to do a Sliger 5U, I find that 2x 140mm fans spaced apart is the quietest configuration, based on a 5U HTPC home build I did recently. I've since converted that project to strictly NAS and am considering a Sliger 4U CX4150a for the HTPC. The simplistic industrial design is very attractive.

However, I've always envisioned something a bit more sleek/cosmetic for high-end A/V and home theater racks: an entirely glass front surface, black and 25% semi-transparent, so you can see illuminated motherboards and components only when powered on, with air drawn in from a 140mm fan on each side of the enclosure.

@JBOD JEDI you sort of nailed the reason for going to 140mm. You increase the outside edge surface area that air mainly gets pulled along. If you keep the total volume of air constant and only increase the size of the fan, you get lower fan RPM and lower velocity, all of which equates to less noise at the same air volume being pushed.
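The "same air, lower RPM" argument can be put into numbers with the ideal fan affinity law for geometrically similar fans, where flow scales as Q ∝ N·D³. Holding flow fixed gives N₂ = N₁·(D₁/D₂)³, and since tip speed is π·D·N, tip speed falls by (D₁/D₂)². This is a sketch under that idealization: real 120mm and 140mm fans are not exactly scaled copies of each other, and the 1200 RPM starting point is an arbitrary example.

```python
import math


def rpm_for_same_flow(rpm_small: float, d_small_mm: float, d_large_mm: float) -> float:
    """Fan affinity law Q ∝ N * D^3: holding Q fixed gives N2 = N1 * (D1/D2)^3."""
    return rpm_small * (d_small_mm / d_large_mm) ** 3


def tip_speed_m_s(rpm: float, d_mm: float) -> float:
    """Blade tip speed = pi * D * N, with D in metres and N in revolutions per second."""
    return math.pi * (d_mm / 1000) * (rpm / 60)


rpm_140 = rpm_for_same_flow(1200, 120, 140)
print(f"140mm RPM for the same flow as a 120mm at 1200 RPM: {rpm_140:.0f}")
print(f"tip speed: {tip_speed_m_s(1200, 120):.2f} m/s -> {tip_speed_m_s(rpm_140, 140):.2f} m/s")
```

Under these assumptions, the 140mm fan moves the same air at roughly 63% of the RPM and about 73% of the tip speed, which is the "lower RPM and lower velocity" effect described above.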

I'm still very tempted to buy one of his 4U 3-fan configurations, as they're great-looking cases and I don't need the massively long Rosewill case I'm in now (2 walls of fans).

Ah, I see your point. Since the design is intended to be compatible with water cooling, the 3x 120mm fans can't be separated anyhow, so you may as well go with 3x 140mm.

However, because the fans are "inset" into the enclosure in this design, at 3x 140mm in a 4U the perimeter encroaches on the top and bottom walls of the enclosure, leaving only 5/8" of air space. The efficiency gains really begin to kick in at 3/4" to 1" of spacing, so it would be cutting it close and losing some flow efficiency. The sides are worse: even with the power button and USB ports removed, 3x 140mm would leave no gap between the fan assembly and the enclosure sides. In experiments I've done, the loss from obstructing the air gap greatly exceeds the benefit of reduced RPM with larger fans. Some fans behave a little differently than others, but generally, no gap just makes a lot of swooshing sound with little air flow actually exiting the fan.

The exception would be if the fans were mounted flush against the front of the enclosure, so that no wall surfaces block the perimeter of the fan assembly. This would actually be the ideal arrangement for maximizing air flow and minimizing RPM/blade speed.

I myself have not seen a scenario in which I needed more air flow than 3x 120mm or 2x 140mm fans produce at silent speeds.
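The clearance argument for 3x 140mm in a 4U is simple arithmetic: a 4U box is 7.00" tall on the outside (1U = 1.75"), and a 140mm fan is about 5.51". The interior height and width below are HYPOTHETICAL values, chosen so the top/bottom gap roughly matches the 5/8" quoted above; measure your own chassis rather than trusting them.

```python
MM_PER_INCH = 25.4
RACK_UNIT_IN = 1.75  # one rack unit (1U) is 1.75 in


def fan_gaps_in(fan_mm: float, n_fans: int,
                interior_height_in: float, interior_width_in: float) -> tuple[float, float]:
    """Return (gap above/below, gap at each side) in inches for a row of
    n_fans square fans centred in the given interior opening."""
    fan_in = fan_mm / MM_PER_INCH
    top_bottom = (interior_height_in - fan_in) / 2
    sides = (interior_width_in - n_fans * fan_in) / 2
    return top_bottom, sides


# HYPOTHETICAL interior dimensions, for illustration only.
outer_height = 4 * RACK_UNIT_IN  # 7.0 in for a 4U chassis
top_bottom, sides = fan_gaps_in(140, 3, interior_height_in=6.75, interior_width_in=16.6)
print(f"4U outer height: {outer_height:.2f} in")
print(f"3x 140mm: {top_bottom:.3f} in above/below, {sides:.3f} in at each side")
```

Three 140mm frames alone span about 16.54", so in a typical 19" rack chassis there is almost nothing left at the sides, well short of the 3/4" to 1" spacing described above.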

In addition to using a CX4150a/i for HTPC, I'm considering modifying another CX4150a/CX4170a/CX4200a for NAS.

I've lost my appeal for backplanes in a 24/7 NAS because they consume energy, require caddies, restrict air flow, and I'm at the point I've no need for hot swap capability.

The open design of these enclosures makes it super easy to weld in some guide rails for retaining hard drives in a top-down NAS configuration. The top down configuration has grown on me because there is zero restriction of air flow across the drives, as all of the SATA and power connectors are up and above the air flow path.

Other notes:

SATA power would be DIY, using push-in pass-through connectors, 7 drives per rail.

The Deepcool AK620 is testing better than the Noctua NH-D15, and thus better than the recommended NH-D12L, and costs less than either. It's 160mm high, but the top cover can be removed for a height of 157mm. It may just need the fans slid down a fin to fit within the 158mm required by the case.
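The cooler-height question reduces to a clearance margin against the case limit. This tiny sketch uses only the figures from the note above (160mm stock, 157mm with the top cover removed, 158mm case limit); it says nothing about whether the fans themselves protrude above the fin stack, which is why sliding them down may still be needed.

```python
def clearance_mm(cooler_height_mm: float, case_limit_mm: float) -> float:
    """Positive means headroom; negative means the cooler is too tall."""
    return case_limit_mm - cooler_height_mm


CASE_LIMIT_MM = 158  # cooler height limit quoted for the case
for label, height in (("AK620 stock", 160), ("AK620, top cover removed", 157)):
    margin = clearance_mm(height, CASE_LIMIT_MM)
    print(f"{label}: {margin:+} mm ({'fits' if margin >= 0 else 'too tall'})")
```

With the cover removed the margin is only 1mm, so any fan that sits above the fin stack would eat it immediately.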

The Noctua chromax.black fans don't have that funky brown & beige color.
