Hey,

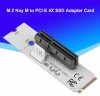

So this is what I'm talking about: https://smile.amazon.com/Sintech-NGFF-NVME-WiFi-Cable/dp/B07DZF1W55/

I know a single PCIe lane is lame compared to x4, but hear me out: I want to build a cluster out of the five Dell 7050 Micros I have. I got a bunch of their motherboards for $10 each during the pandemic and have been slowly piecing them together ever since, as I've had the resources.

Fast forward to today: I'm about to start putting them sideways in a 4U hardcase rack with 3x120mm fans blowing on them, so a card with a ribbon cable would work fine. I'm leaving the tops off the cases because of their thermal "design" (ahem). In fact, the only reason I'm using the bottoms of the cases at all is their proprietary CPU heatsink retention system, but I digress...

The thing about any of these micro computers is that they're light on upgrade options. I could use the x4 slot for NVMe as intended, but I was thinking of getting an M.2-to-PCIe adapter and running RDMA-capable 10GbE cards like the Chelsio T520-CR, or something from Broadcom or Marvell that's on the ESXi HCL.

Actually, now that I think about it, 10GbE is essentially 1,250 MB/s max (on paper, not in reality), which is much closer to a single PCIe 3.0 lane (985 MB/s) than to an M.2 NVMe drive, which can hit upwards of 3,500 MB/s sequential. So maybe using the WiFi slot (M.2 x1, E-key or A+E-key) for the storage networking would make more sense than using the NVMe slot.
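A quick back-of-the-envelope check of those numbers, as a Python sketch. It assumes decimal megabytes (1 MB = 10^6 bytes) and PCIe 3.0's 8 GT/s per lane with 128b/130b encoding, and it ignores PCIe protocol and Ethernet framing overhead, so real-world throughput will be somewhat lower. The 3,500 MB/s NVMe figure is the spec-sheet number from above, not a measurement.

```python
# Raw PCIe 3.0 vs 10GbE bandwidth in decimal MB/s (1 MB = 10**6 bytes).
# Only line encoding is accounted for; protocol and framing overhead are ignored.

PCIE3_GT_PER_S = 8.0          # PCIe 3.0 signalling rate per lane, GT/s
PCIE3_ENCODING = 128 / 130    # 128b/130b line encoding efficiency

def pcie3_mb_s(lanes: int) -> float:
    """Raw bandwidth of a PCIe 3.0 link, per direction, in MB/s."""
    gbit_per_s = PCIE3_GT_PER_S * PCIE3_ENCODING * lanes
    return gbit_per_s * 1000 / 8

def ethernet_mb_s(gbit: float) -> float:
    """Ethernet line rate converted from Gb/s to MB/s (one direction)."""
    return gbit * 1000 / 8

ten_gbe = ethernet_mb_s(10)
x1 = pcie3_mb_s(1)
x4 = pcie3_mb_s(4)
nvme_seq = 3500  # assumed spec-sheet sequential read of a PCIe 3.0 x4 NVMe drive

print(f"10GbE line rate : {ten_gbe:7.1f} MB/s")
print(f"PCIe 3.0 x1     : {x1:7.1f} MB/s ({x1 / ten_gbe:.0%} of 10GbE)")
print(f"PCIe 3.0 x4     : {x4:7.1f} MB/s ({nvme_seq / x4:.0%} used by a {nvme_seq} MB/s NVMe)")
```

On paper, then, a single 10GbE port on an x1 PCIe 3.0 link would top out at roughly 79% of line rate.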

But my point is, I'm never going to use this WiFi slot for WiFi; I'd like to use it for either a 10GbE card or an x4 NVMe drive. Has anyone tried one of these A+E-key to M-key adapters? I imagine they're pretty obscure...

I was just thinking of putting the NVMe drive in the WiFi slot instead of the NVMe slot: x1 is only 1/4 of x4, while the 10GbE cards are x8, so x1 is just 1/8 of what they expect, a much more dramatic reduction. But then, considering the actual throughput of a 10GbE card... why do they need x8 again? Duplex?
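On the x8 question, here's a rough Python sketch of how many lanes a 10GbE card needs to run at line rate. The bandwidths are per direction: each PCIe lane already has separate transmit and receive pairs, so duplex on its own shouldn't double the requirement. Assumptions: decimal MB, encoding overhead only, and a dual-port card (the T520-CR has two SFP+ ports) counted as two ports running flat out. The `lanes_needed` helper is hypothetical, just for illustration.

```python
import math

# Raw per-lane, per-direction bandwidth in decimal MB/s after line encoding.
PER_LANE_MB_S = {
    "PCIe 2.0": 5.0 * (8 / 10) * 1000 / 8,     # 5 GT/s, 8b/10b    -> 500 MB/s
    "PCIe 3.0": 8.0 * (128 / 130) * 1000 / 8,  # 8 GT/s, 128b/130b -> ~984.6 MB/s
}
LINK_WIDTHS = (1, 2, 4, 8, 16)  # standard PCIe link widths

def lanes_needed(ports: int, gbit_per_port: float, gen: str) -> int:
    """Smallest standard link width that carries every port at line rate."""
    # Per-direction requirement; PCIe lanes are full duplex, so no doubling.
    need_mb_s = ports * gbit_per_port * 1000 / 8
    raw = math.ceil(need_mb_s / PER_LANE_MB_S[gen])
    # Round up to the next width a real link can train at.
    return next(w for w in LINK_WIDTHS if w >= raw)

for gen in PER_LANE_MB_S:
    for ports in (1, 2):
        print(f"{gen}, {ports} x 10GbE -> x{lanes_needed(ports, 10, gen)}")
```

If these numbers hold, x8 is roughly what a dual-port card needs on a PCIe 2.0 host, while on PCIe 3.0 x4 would already cover both ports; the extra width is presumably there for compatibility with older slots. PCIe links also negotiate down to narrower widths, so an x8 card should still train at x4 or x1, just with less bandwidth.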

Ok, this is long and rambling, but if you were me, what would you do? Use the x4 slot for an x8 card and the x1 slot for an x4 NVMe drive, or use the NVMe slot for its intended purpose and the x1 slot for 10GbE?
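And a crude comparison of the two layouts, taking the lower of device speed and link speed as each device's ceiling. This assumes both M.2 slots run at PCIe 3.0 (not verified for the 7050 Micro's WiFi slot), a single active 10GbE port at 1,250 MB/s, and the 3,500 MB/s sequential NVMe figure; the `effective` helper is hypothetical, just for illustration.

```python
# Hypothetical comparison of the two slot layouts.
# Assumed: PCIe 3.0 on both M.2 slots (x4 M-key, x1 A+E-key),
# one 10GbE port at 1250 MB/s, an NVMe drive rated 3500 MB/s sequential.
PER_LANE = 8.0 * (128 / 130) * 1000 / 8  # PCIe 3.0, MB/s per lane per direction

def effective(device_mb_s: float, lanes: int) -> float:
    """A device can't run faster than itself or than its link."""
    return min(device_mb_s, lanes * PER_LANE)

NIC, NVME = 1250.0, 3500.0
layouts = {
    "NIC in x4, NVMe in x1": {"nic": effective(NIC, 4), "nvme": effective(NVME, 1)},
    "NVMe in x4, NIC in x1": {"nic": effective(NIC, 1), "nvme": effective(NVME, 4)},
}
for name, bw in layouts.items():
    print(f"{name}: NIC {bw['nic']:.0f} MB/s, NVMe {bw['nvme']:.0f} MB/s")
```

By this rough measure, keeping the NVMe drive in the x4 slot costs the NIC about a fifth of its line rate, whereas moving the drive to x1 gives up roughly 70% of its sequential speed.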