Not sure what your goal is here (information, advertisement, discussion), but I'd wonder

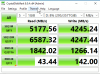

Plain discussion only, and of course curiosity for additional input to improve. What baffled me in today's test run was the 40µs latency, which made me think: ooook, just post it somewhere and see what others think.
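To put the 40µs into perspective, here is a rough back-of-the-envelope sketch. It assumes the figure is the average completion latency per I/O at queue depth 1, which the screenshot may or may not measure; the function names and the 4 KiB block size are only illustrative.

```python
def qd1_iops(latency_us: float) -> float:
    """Upper bound on I/Os per second when only one I/O is in flight at a time."""
    return 1_000_000 / latency_us


def qd1_throughput_mb_s(latency_us: float, block_bytes: int) -> float:
    """Throughput in MB/s (10^6 bytes) for one synchronous stream of fixed-size I/Os."""
    return qd1_iops(latency_us) * block_bytes / 1e6


if __name__ == "__main__":
    latency_us = 40      # latency from the test run
    block_bytes = 4096   # assumed block size, not taken from the test
    print(f"{qd1_iops(latency_us):.0f} IOPS")
    print(f"{qd1_throughput_mb_s(latency_us, block_bytes):.1f} MB/s")
```

Under those assumptions a single synchronous stream tops out at about 25,000 IOPS, so any higher number has to come from larger blocks or more I/Os in flight.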

Apart from that, eraraid is free for up to 4 drives - so it's an option for any home server.

- how a single drive compares against this

Good question - gotta see, once I can test it.

- how regular OS software raid [mdadm, Windows software raid] compares against this

Way worse, but I didn't screenshot those runs several days ago. mdadm improved in read speed in this year's update. Write speed still sucks with mdadm.

- how other raid solutions (Intel VROC [not on this board of course]) compare

Sequential large file reads perform quite similarly across solutions - up to 30% slower, but at 30GB/s, which is more theoretical, it doesn't matter. Real world applications are quite close to the 3rd screenshot - that is where solutions usually break.

- What is the use case? Secure storage for a single VM Host?

In my case it's twofold:

- A high-end block I/O workstation for graphics in general, DTP and video editing (bursts of high I/O usage).

- High-end network shares for directSMB.

- What networked performance looks like (i.e. how much do you lose in a distributed setup)

That's the next part - I already have tests and setups, but I'm not yet satisfied enough with them to show. The server is connected at 100G to the network - my goal (hope) is to saturate 100G with single-threaded, synchronous loads of large files (10GB - 2TB size).
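For reference, a minimal sketch of what "saturating 100G" means in numbers, plus a naive single-threaded, synchronous read loop to compare against it. The function names and the 8 MiB block size are my own picks for illustration, and protocol overhead is ignored, so the real ceiling sits somewhat below the raw line rate.

```python
import time

LINK_GBIT = 100  # link speed of the server, in Gbit/s


def line_rate_bytes_per_s(gbit: float) -> float:
    """Raw line rate in bytes/s, ignoring Ethernet/IP/SMB overhead."""
    return gbit * 1e9 / 8


def ideal_transfer_seconds(size_bytes: float, gbit: float = LINK_GBIT) -> float:
    """Best-case time to move size_bytes over the link at raw line rate."""
    return size_bytes / line_rate_bytes_per_s(gbit)


def sync_read_throughput(path: str, block_size: int = 8 * 1024 * 1024) -> float:
    """Read a file front to back with one thread and blocking reads; return bytes/s."""
    total = 0
    start = time.perf_counter()
    with open(path, "rb", buffering=0) as f:
        while True:
            chunk = f.read(block_size)
            if not chunk:
                break
            total += len(chunk)
    elapsed = time.perf_counter() - start
    return total / elapsed if elapsed > 0 else float("inf")


if __name__ == "__main__":
    print(f"line rate: {line_rate_bytes_per_s(LINK_GBIT) / 1e9:.1f} GB/s")
    for size in (10e9, 2e12):  # 10GB and 2TB, the file sizes mentioned above
        print(f"{size / 1e9:.0f} GB -> {ideal_transfer_seconds(size):.1f} s at best")
```

So the target is roughly 12.5 GB/s from a single stream. Note that `sync_read_throughput` goes through the page cache, so a file that was just written or recently read will report inflated numbers.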

- What's the caching strategy of Raidix? Memory? What happens on power loss?

Caching can be configured to some extent for eraraid. Generally I see transaction-oriented continuous reads, while writes happen in bursts. In these test cases it's direct (raw) virtio access of the VM on eraraid. If you like, I can look for a more complete answer.